Machine Learning Successes

Machine learning does an impressive job of correlating sense data with classification tags. It is strongest when the training data and execution data are tightly aligned, and when sense data is relatively consistent.

Organizations are trying to operationalize AIML (artificial intelligence and machine learning) for more purposes than ever before.

Tightly integrated AIML has been successful at solving problems within a single domain.

But this same tight integration makes it hard to coordinate different AIML applications across multiple domains. The combined system then behaves with less confidence, less adaptability, and less robustness than the individual algorithms do on their own. The challenge is threefold: ensuring that individual ML efforts rely on the same background knowledge, ensuring that this background knowledge is updated appropriately, and coordinating the training and deployment of different AIML algorithms based on that knowledge.

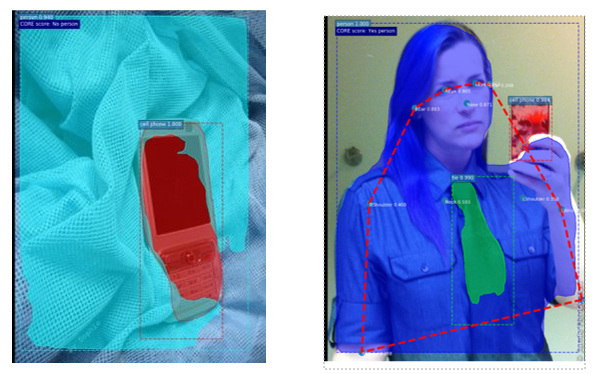

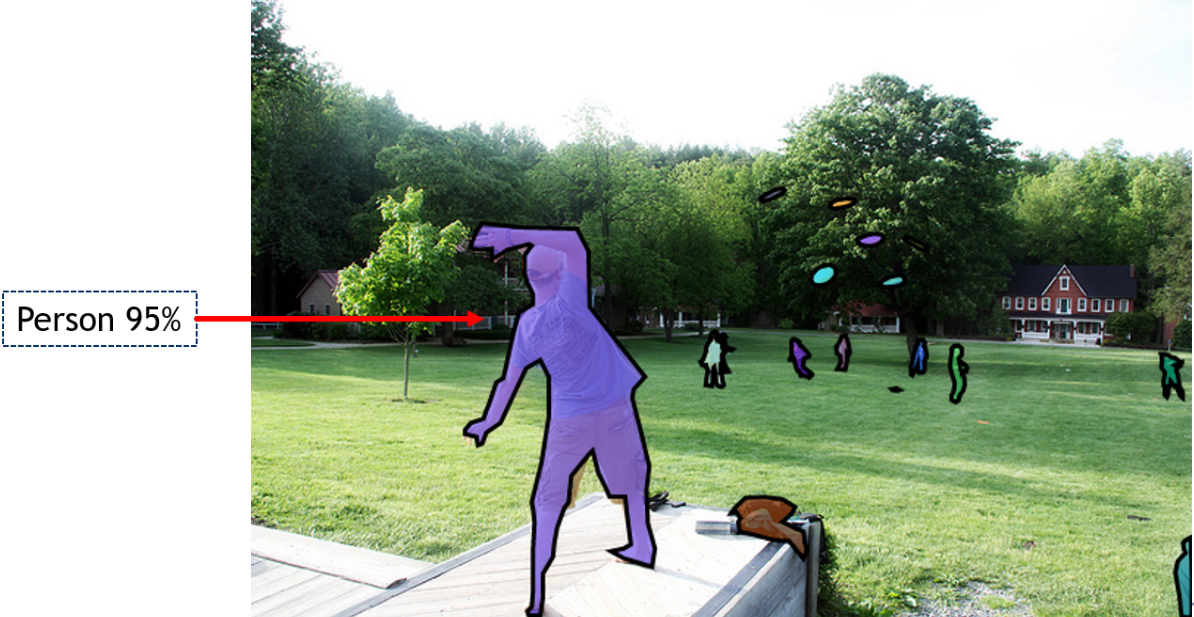

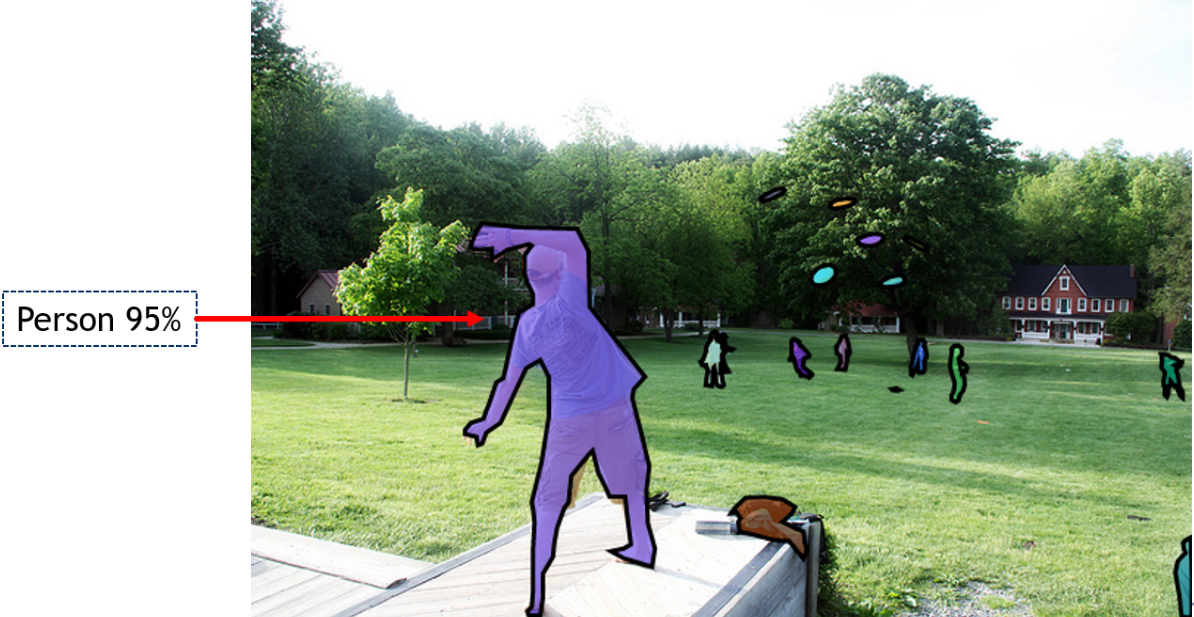

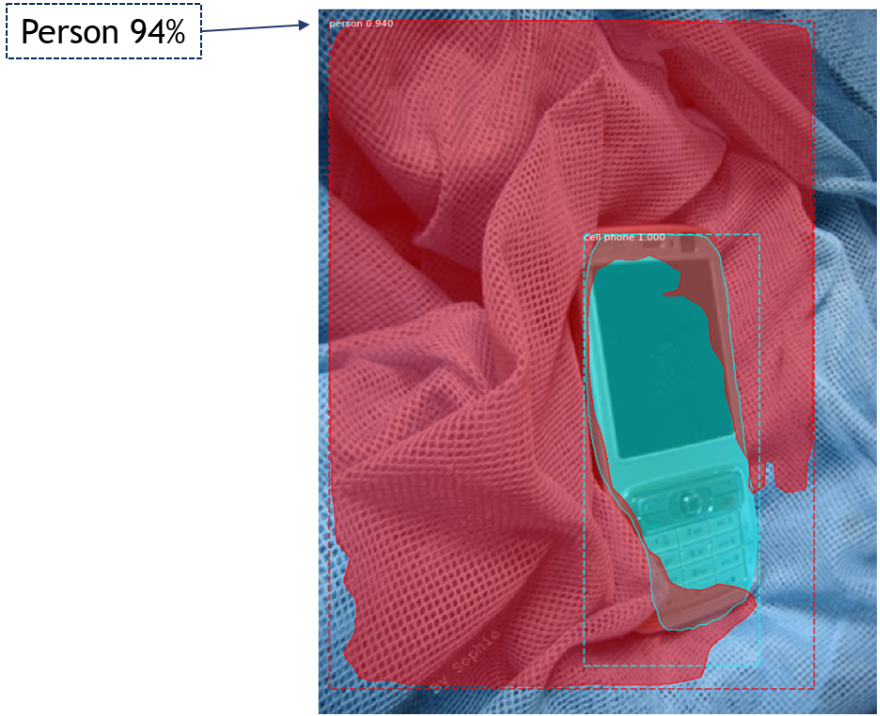

When it does fail, it often does so in ways that even domain novices would not. In this example, the algorithm identifies a piece of cloth as a person, a mistake no child would make.

Even simple cross-validation, checking one result against independent evidence, can catch many mistakes of this kind. In fact, this kind of control-layer validation simplifies the deployment and maintenance of ML while also improving confidence in its outcomes. In this example, we used the simple truth that people have people parts to build a cross-validation routine. The cloth is no longer identified as a person because no people parts are found on it, while the actual person is still identified because people parts are found.
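The "people have people parts" check can be sketched in a few lines of Python. This is a minimal illustration, not the routine used in the example. It assumes a separate part detector that returns labeled bounding boxes. The `Detection` type, the `PEOPLE_PARTS` label set, and the `min_overlap` and `min_parts` thresholds are all hypothetical. A "person" detection is kept only if enough part detections fall mostly inside its box. Detections of every other class pass through unchanged.

```python
from dataclasses import dataclass

# Hypothetical part labels; a real system would use whatever labels its
# part detector actually emits.
PEOPLE_PARTS = {"face", "hand", "arm", "leg", "torso"}


@dataclass(frozen=True)
class Detection:
    label: str
    score: float
    box: tuple  # (x_min, y_min, x_max, y_max)


def overlap_fraction(inner, outer):
    """Fraction of the `inner` box's area that lies inside the `outer` box."""
    ix0, iy0 = max(inner[0], outer[0]), max(inner[1], outer[1])
    ix1, iy1 = min(inner[2], outer[2]), min(inner[3], outer[3])
    intersection = max(0.0, ix1 - ix0) * max(0.0, iy1 - iy0)
    area = (inner[2] - inner[0]) * (inner[3] - inner[1])
    return intersection / area if area > 0 else 0.0


def cross_validate_people(detections, parts, min_overlap=0.5, min_parts=1):
    """Keep 'person' detections only if enough people parts lie inside them."""
    accepted = []
    for det in detections:
        if det.label != "person":
            accepted.append(det)  # this rule only checks the person class
            continue
        supporting = [
            p for p in parts
            if p.label in PEOPLE_PARTS
            and overlap_fraction(p.box, det.box) >= min_overlap
        ]
        if len(supporting) >= min_parts:
            accepted.append(det)
    return accepted


# Illustrative, made-up detections: one real person, one piece of cloth.
detections = [
    Detection("person", 0.91, (0, 0, 100, 200)),    # actual person
    Detection("person", 0.88, (300, 0, 400, 200)),  # cloth misread as a person
]
parts = [
    Detection("face", 0.95, (30, 10, 70, 50)),
    Detection("hand", 0.80, (5, 100, 25, 120)),
]
kept = cross_validate_people(detections, parts)
print([d.box for d in kept])  # → [(0, 0, 100, 200)]
```

The check lives outside the original classifier, as a separate control layer. The rule or its thresholds can therefore change without retraining the person detector.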